Information theory was founded in 1948 by the mathematician Claude Shannon in his paper "A Mathematical Theory of Communication". In it, he sought fundamental limits on signal processing operations so that data could be stored, compressed and communicated reliably. This matters because all computing depends on moving data from one place to another. The central obstacle to this goal is noise in the channels of communication.

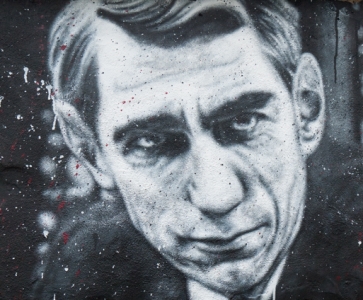

Photograph of Claude Shannon taken by Alfred Eisenstaedt, painted on a wall in France's Abode of Chaos contemporary art museum.

Image Source: thierry ehrmann / Flickr.com.

Transmission over such channels is an engineering problem at the heart of information theory. Shannon's work produced the very important source coding theorem, which states that, on average, the number of bits needed to store or communicate one symbol is determined by the entropy of its source. The coding theory that sprang from this work centers on finding explicit methods ("codes") that make transmission more efficient and reduce the net error rate over a noisy channel. The closer a code brings the transmission rate to the channel's fundamental maximum (its capacity), as described by information theory, the more fully that channel is being used. These codes come in two forms:

- data compression (source coding), which removes redundancy in the initial 'packaging' of the data for sending, and

- error-correction (channel coding), which strives to put right errors that result from the sending itself (see the Error Detection and Correction section for more).
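To make the entropy bound from the source coding theorem more concrete, here is a minimal Python sketch that estimates the entropy of a message from its symbol frequencies. The function name `shannon_entropy` and the sample strings are illustrative assumptions, not anything taken from Shannon's paper, and the estimate treats each symbol as independent of its neighbours.

```python
import math
from collections import Counter


def shannon_entropy(message: str) -> float:
    """Return the empirical entropy of `message` in bits per symbol.

    Hypothetical helper for illustration: symbol probabilities are
    estimated from the frequencies observed in the message itself.
    """
    counts = Counter(message)
    total = len(message)
    # H = -sum(p * log2(p)) over every distinct symbol in the message
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Four equally likely symbols: 2 bits per symbol on average.
uniform = shannon_entropy("abcd")

# A skewed source is more predictable, so its entropy is lower.
skewed = shannon_entropy("aaaaaaab")

print(f"uniform: {uniform:.3f} bits/symbol")
print(f"skewed:  {skewed:.3f} bits/symbol")
```

The skewed message has lower entropy than the uniform one, which is why a good compression code can represent it in fewer bits per symbol on average.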